Probability for Machine Learning

- Author: admin-nextgen

- Posted On: September 1, 2022

Random variables hold values derived from the outcomes of random experiments. For example, random variable X holds the number of heads in flipping a coin 100 times.

A probability distribution describes the possible values that a random variable can take and their corresponding likelihoods. For example, the distribution of X gives the probabilities of getting 0, 1, 2, …, 100 heads respectively.
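As a minimal sketch of such a distribution, the snippet below computes the probability of every possible head count in 100 flips. It assumes (this is not stated above) that the coin is fair and the flips are independent, so the counts follow a binomial distribution. The names `binomial_pmf`, `n_flips`, and `p_head` are illustrative.

```python
from math import comb

def binomial_pmf(k, n, p):
    """Probability of exactly k heads in n independent flips with P(head) = p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

n_flips = 100   # number of coin flips in the experiment
p_head = 0.5    # assumption: a fair coin

# The distribution assigns a probability to every possible value of X.
distribution = {k: binomial_pmf(k, n_flips, p_head) for k in range(n_flips + 1)}

print(f"P(X = 50) = {distribution[50]:.4f}")
print(f"P(X = 56) = {distribution[56]:.4f}")
print(f"sum over all values = {sum(distribution.values()):.6f}")
```

The probabilities over all 101 possible values add up to one, which is what makes the table a probability distribution.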

A frequentist calculates a probability as the relative frequency of a specific event over the total number of trials. For example, P(Head) = 0.56 if there are 56 heads out of 100 flips.

A Bayesian updates a prior belief with the data from the current experiment using Bayesian inference. For example, a Bayesian can combine a prior belief that the coin is fair with the current experiment data (56 heads out of 100) to form a new belief (details later).

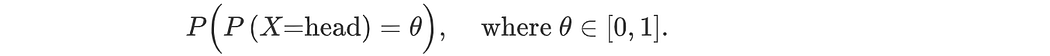

A frequentist estimates the single most likely value of P(head) (a point estimate), whereas a Bayesian tracks all possible values together with their corresponding certainties. The Bayesian calculation is more complex but carries richer information for further computation.
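The contrast can be sketched numerically: the frequentist estimate is a single number, while the Bayesian result is a distribution over candidate values of P(head). The grid of candidate values and the bell-shaped prior centered at 0.5 (standing in for "the coin is probably fair") are assumptions made for illustration only.

```python
from math import comb, exp

heads, flips = 56, 100

# Frequentist point estimate: relative frequency of heads.
point_estimate = heads / flips

# Bayesian: keep a belief over a grid of candidate values for P(head).
grid = [i / 100 for i in range(1, 100)]          # 0.01, 0.02, ..., 0.99

# Assumed prior: bell-shaped around 0.5 ("the coin is probably fair").
prior = [exp(-((p - 0.5) ** 2) / (2 * 0.1 ** 2)) for p in grid]
total = sum(prior)
prior = [w / total for w in prior]

# Likelihood of the observed data for each candidate value.
likelihood = [comb(flips, heads) * p**heads * (1 - p)**(flips - heads) for p in grid]

# Posterior is proportional to likelihood * prior; dividing by the sum normalizes it.
unnormalized = [lik * w for lik, w in zip(likelihood, prior)]
evidence = sum(unnormalized)
posterior = [u / evidence for u in unnormalized]

posterior_mean = sum(p * w for p, w in zip(grid, posterior))
print(f"frequentist point estimate: {point_estimate:.2f}")
print(f"posterior mean:             {posterior_mean:.3f}")
```

With this prior, the posterior mean lands between the prior's center (0.5) and the observed frequency (0.56), and the full `posterior` list records how much certainty is attached to each candidate value.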

Sample space Ω contains all the possibilities of an experiment, e.g. {HH…, HT…, TH…, TT…, ….} in the coin-flipping experiment.

The event space contains all the possible subsets of Ω, e.g., {{HH…}, {HH…, HT…}, …}. In probability theory, we calculate the probability P(A) of an event A in the event space (e.g., A is the event in which the flips are all heads or all tails).

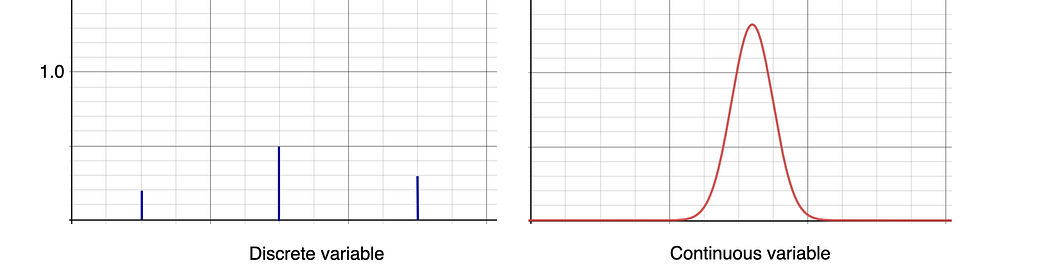

The probability mass function p(x) is the function P(X=x), where X is a discrete random variable.

The probability density function (PDF) f(x) is for continuous random variables. In continuous space, the probability of X falling within an interval [a, b] is computed as

$$P(a \le X \le b) = \int_a^b f(x)\,dx$$

Unlike a probability in discrete space, the density f(x) can be larger than one, and P(X=x) equals zero for any single value x.
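A small numerical check illustrates both points. The uniform distribution on [0, 0.5] is an assumed example, chosen because its density is 2 everywhere on that interval while the total probability is still 1. The helper names `uniform_pdf` and `probability` are illustrative.

```python
def uniform_pdf(x, low=0.0, high=0.5):
    """Density of a uniform distribution on [low, high] (illustrative choice)."""
    return 1.0 / (high - low) if low <= x <= high else 0.0

def probability(a, b, pdf, steps=10_000):
    """Approximate P(a <= X <= b) with a midpoint Riemann sum of the density."""
    width = (b - a) / steps
    return sum(pdf(a + (i + 0.5) * width) for i in range(steps)) * width

print(uniform_pdf(0.25))                      # density value, larger than one
print(probability(0.0, 0.5, uniform_pdf))     # total probability
print(probability(0.1, 0.2, uniform_pdf))     # probability of an interval
print(probability(0.25, 0.25, uniform_pdf))   # zero-width interval, i.e. P(X = x)
```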

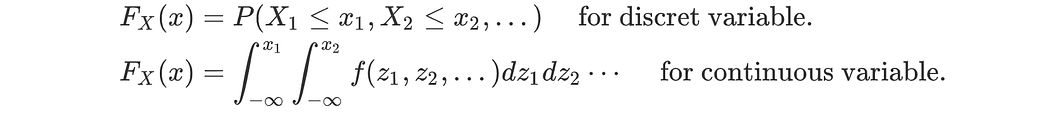

Cumulative distribution function CDF F(x) accumulates the probability values up to x, P(X≤x).

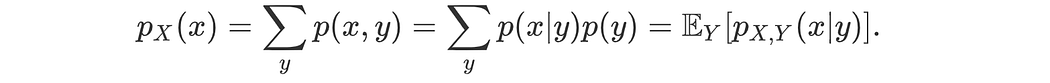

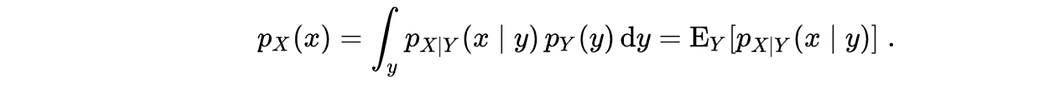

The marginal probability of x sums (or integrates) a joint distribution over the variables other than x. In the discrete space, it is

$$p(x) = \sum_{y} p(x, y)$$

In the continuous space,

$$p(x) = \int p(x, y)\,dy$$
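For the discrete case, marginalization amounts to adding up rows of a joint table. The sketch below uses a made-up joint distribution over weather and traffic; the table values and the name `marginal_x` are hypothetical.

```python
from collections import defaultdict

# Hypothetical joint distribution p(x, y) over weather x and traffic y.
joint = {
    ("sunny", "light"): 0.40,
    ("sunny", "heavy"): 0.20,
    ("rainy", "light"): 0.10,
    ("rainy", "heavy"): 0.30,
}

def marginal_x(joint):
    """Sum the joint probabilities over y to obtain p(x)."""
    p_x = defaultdict(float)
    for (x, _y), p in joint.items():
        p_x[x] += p
    return dict(p_x)

p_x = marginal_x(joint)
print(p_x)
```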

i.i.d.: A collection of random variables is independent and identically distributed (i.i.d.) if those random variables have the same probability distribution and are mutually independent. For example, random variable X is sampled with replacement from the same population.

Notation

Rules of Probability

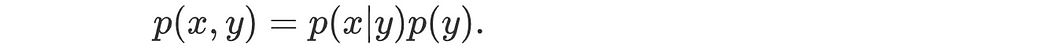

Product rule:

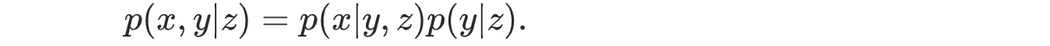

And,

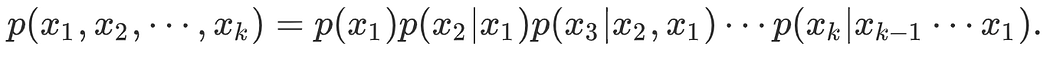

Chain rule:

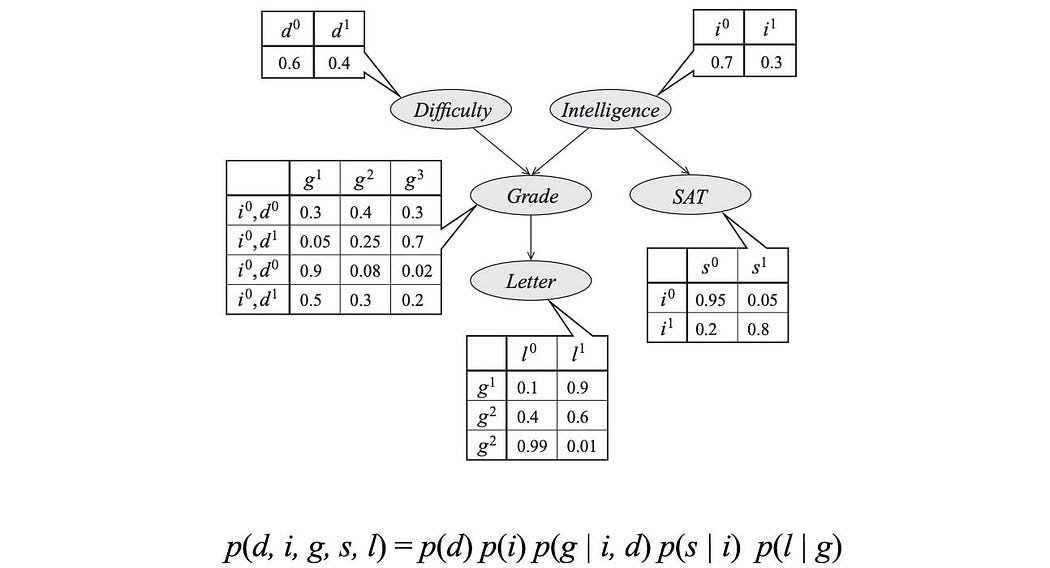

Using a graphical model, we can further simplify the R.H.S. factorization above. For example, in the model below, p(l | d, i, g, s) = p(l | g): once the grade g is known, l is conditionally independent of d, i, and s (l ⫫ d, i, s | g), because the information in “Grade” is sufficient to derive whether you will get a good “recommendation letter”.

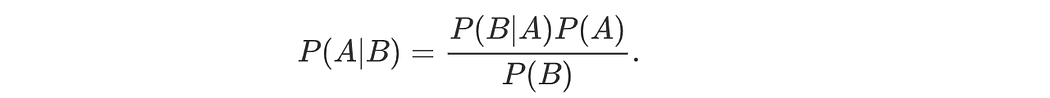

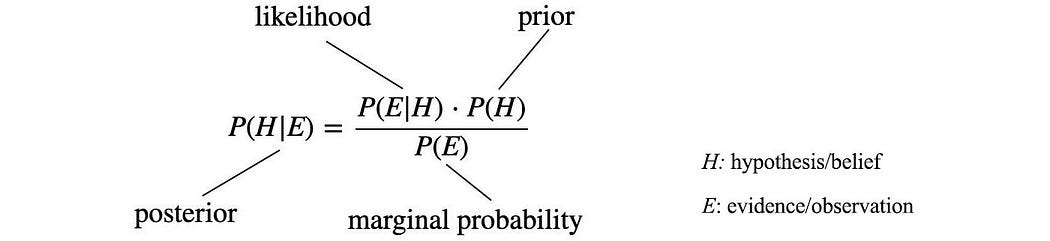

Bayes’ Theorem

Let’s say symptom B happens in all patients having some rare cancer A, while only 0.01% of cancer-free people have this symptom. So how worried should people with this symptom be? Bayes’ Theorem gives a surprising answer: there is only a 9.1% chance that they have this cancer.

Intuitively, even a tiny portion of a large population can be much greater than a large portion of a tiny population. The number of cancer-free people having the symptom is much higher than the number of patients with this rare cancer.
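The 9.1% figure can be reproduced with a few lines of arithmetic. The prevalence of the cancer is not given in the text above; the value of 1 in 100,000 used here is an assumption, chosen because it is consistent with the quoted result. The function and variable names are illustrative.

```python
def posterior_probability(prior, likelihood, false_positive_rate):
    """P(A|B) via Bayes' theorem, with P(B) expanded by the law of total probability."""
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence

p_cancer = 1 / 100_000             # assumed prevalence P(A); not stated in the text
p_symptom_given_cancer = 1.0       # symptom B occurs in all patients with cancer A
p_symptom_given_healthy = 0.0001   # 0.01% of cancer-free people show the symptom

p = posterior_probability(p_cancer, p_symptom_given_cancer, p_symptom_given_healthy)
print(f"P(cancer | symptom) = {p:.1%}")   # prints 9.1% under the assumed prevalence
```

Under this assumption, roughly 10 cancer-free people show the symptom for every patient who actually has the cancer, which is why the posterior stays low.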

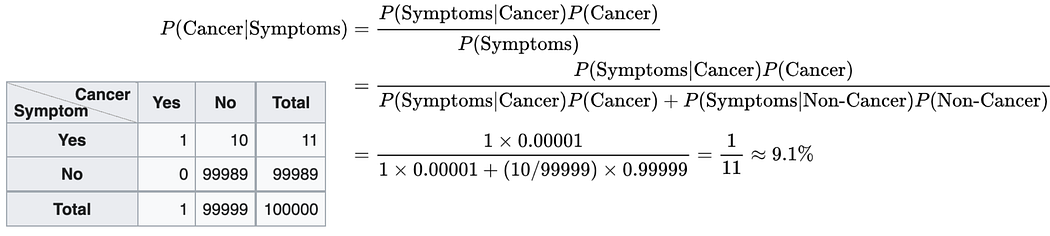

Bayesian inference

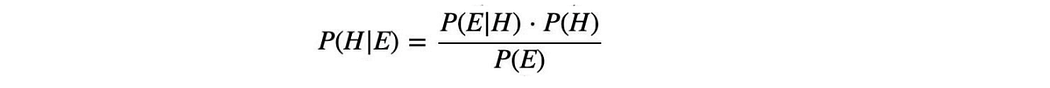

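In Bayesian inference, the terms of Bayes’ Theorem, P(H|E) = P(E|H) P(H) / P(E), are labeled as follows: P(H) is the prior, P(E|H) is the likelihood, P(E) is the evidence (the marginal probability of the data), and P(H|E) is the posterior.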

So given

- a prior on a belief,

- the likelihood of the evidence (observed data) given the belief, and

- the probability of the evidence P(E),

we can compute the posterior P(H|E). This forms our new belief given the newly observed data.

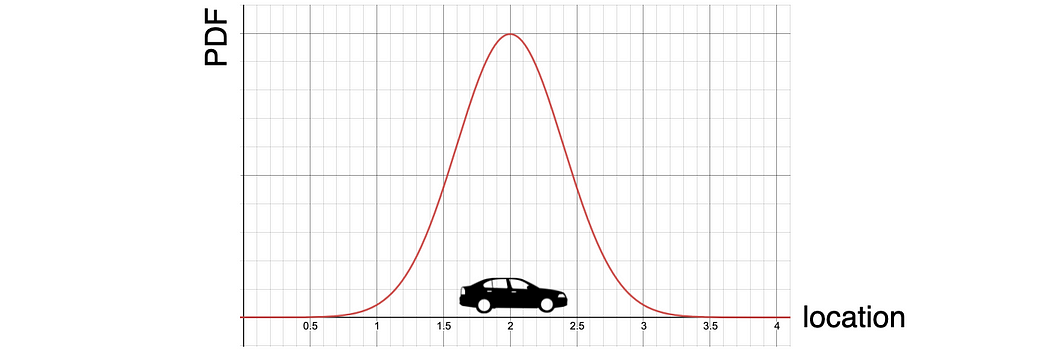

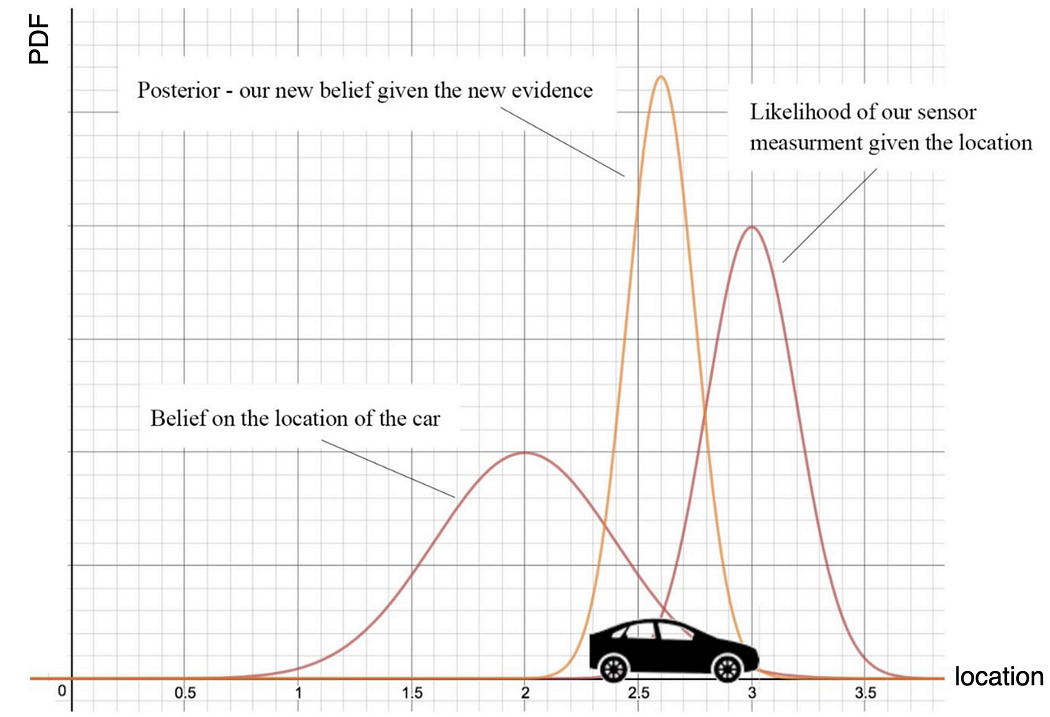

Let’s elaborate on it with an example of estimating a car’s location. In the Bayesian approach, we track the probability distribution over all possible values of H. Say the prior belief P(H) of the car location is

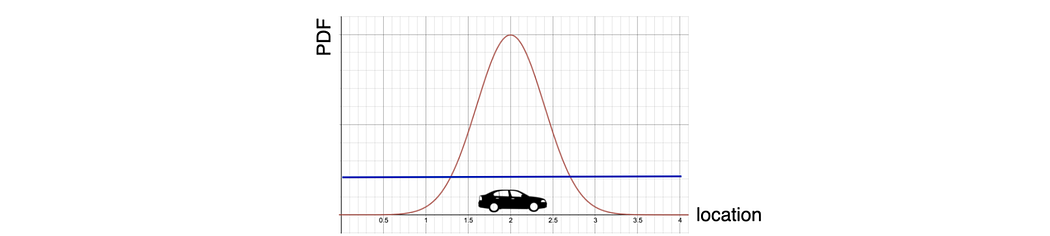

If it is unknown, we can start with a uniform distribution, like the blue line below, i.e. any guess is equally likely within a certain range.

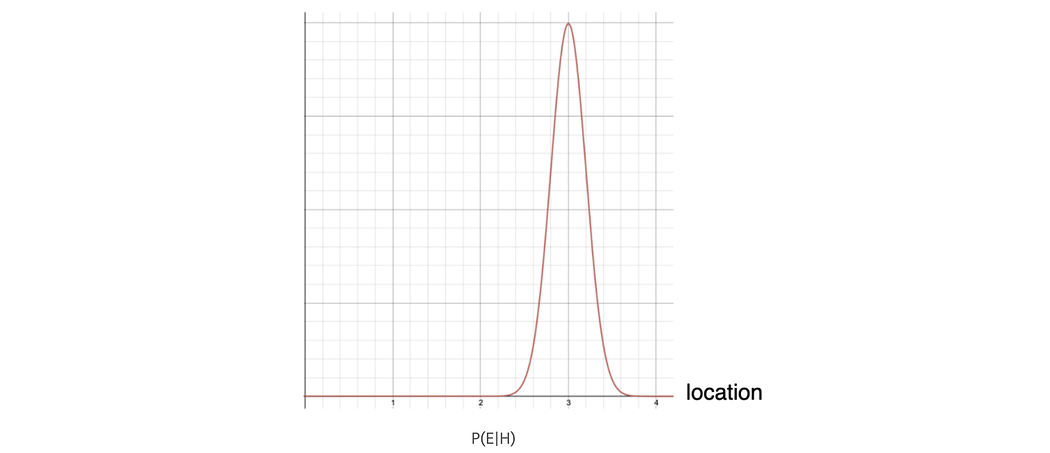

Next, we collect sensor measurements to estimate the present location of the car. A model, say provided by the sensor vendor, can be used to compute the likelihood P(E|H). The diagram below is the calculated likelihood of the measurements over all possible locations.

In Bayesian inference, P(E) normalizes the numerator to form a probability distribution. It is computed as the marginal probability that integrates over all possible h.

$$P(E) = \int P(E \mid h)\,P(h)\,dh$$

With all three terms in the R.H.S. of Bayes’ Theorem ready, we compute the posterior, P(H|E).

This is the new belief of the car location given this new evidence. For the next time iteration, we use it as the new prior.
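The update loop can be sketched on a discretized road, where the integral for P(E) becomes a plain sum. Everything specific here is an assumption for illustration: the 1-D grid of positions, the Gaussian-shaped sensor model with a noise level of 2.0 standing in for the vendor's model, and the two sensor readings.

```python
from math import exp

positions = list(range(0, 51))    # candidate car locations h on a 1-D road

def sensor_likelihood(measurement, h, noise=2.0):
    """Assumed sensor model P(E|H=h), up to a constant that cancels on normalization."""
    return exp(-((measurement - h) ** 2) / (2 * noise ** 2))

def bayes_update(prior, measurement):
    """Return the posterior P(H|E) over all positions for one measurement."""
    unnormalized = [sensor_likelihood(measurement, h) * p
                    for h, p in zip(positions, prior)]
    evidence = sum(unnormalized)              # P(E): sum over every possible h
    return [u / evidence for u in unnormalized]

# Unknown starting location: uniform prior.
belief = [1 / len(positions)] * len(positions)

for measurement in [20.0, 22.0]:              # hypothetical sensor readings
    belief = bayes_update(belief, measurement)   # posterior becomes the next prior

best = max(zip(belief, positions))[1]
print(f"most probable location: {best}")
```

On a small grid like this the normalization is cheap; the difficulty arises when H has many dimensions and the sum or integral can no longer be enumerated.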

In general, however, the integration in the marginal probability is intractable, so approximation and sampling methods are used to address the problem.

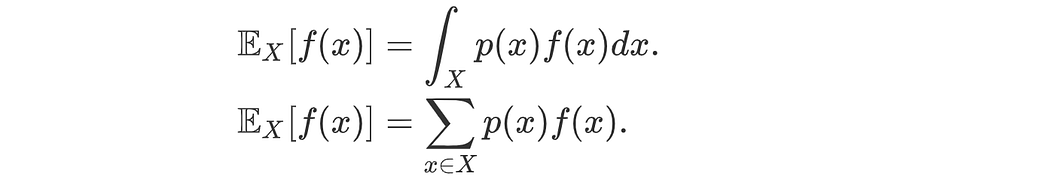

Expectation, Variance, and Covariance

Expectation

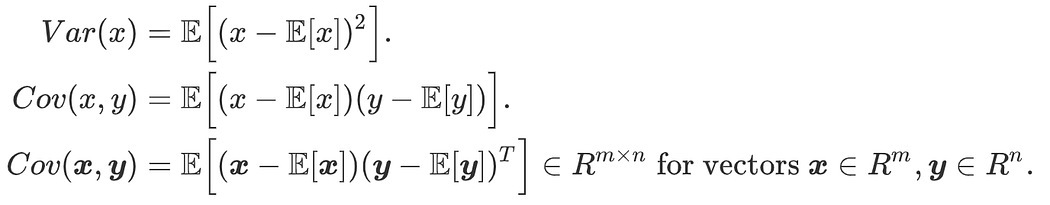

Variance & Covariance definition

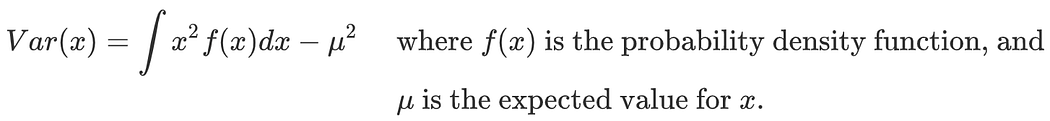

For a continuous random variable,

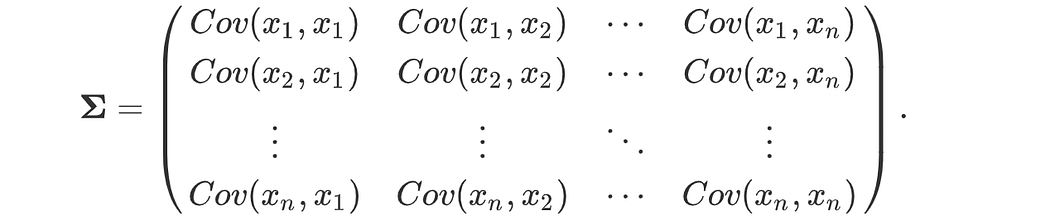

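The covariance matrix is the variance of a vector x, i.e. var(x) = cov(x, x):

$$\operatorname{var}(\mathbf{x}) = \operatorname{cov}(\mathbf{x}, \mathbf{x}) = \mathbb{E}\left[(\mathbf{x} - \mathbb{E}[\mathbf{x}])(\mathbf{x} - \mathbb{E}[\mathbf{x}])^\top\right]$$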

Correlation normalizes the covariance by the standard deviations of X and Y, so that corr(X, Y) ∈ [-1, 1].

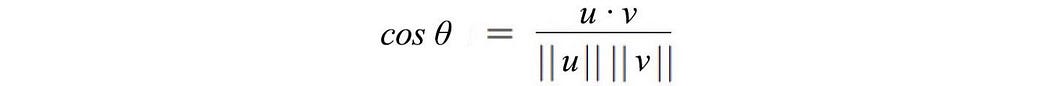

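To conceptualize the geometric properties of random variables, let’s define the inner product of two random variables as their covariance.

$$\langle X, Y \rangle = \operatorname{Cov}(X, Y)$$

Then the length of a random variable is its standard deviation, and the cosine of the angle between two random variables is their correlation.

$$\cos\theta = \frac{\langle X, Y \rangle}{\lVert X \rVert\,\lVert Y \rVert} = \frac{\operatorname{Cov}(X, Y)}{\sigma_X\,\sigma_Y} = \operatorname{corr}(X, Y)$$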
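The geometric picture can be checked on sample data: after subtracting the means, the correlation computed from the covariance matches the cosine of the angle between the two centered data vectors. The paired samples below are hypothetical, and the helper names are illustrative.

```python
from math import sqrt

def centered(values):
    """Subtract the mean so the data represent deviations from it."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]

def dot(u, v):
    """Ordinary dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))

# Hypothetical paired samples of two random variables.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 8.1, 9.8]

xc, yc = centered(x), centered(y)
n = len(x)

covariance = dot(xc, yc) / n                 # plays the role of the inner product
std_x = sqrt(dot(xc, xc) / n)                # "length" of X
std_y = sqrt(dot(yc, yc) / n)                # "length" of Y
correlation = covariance / (std_x * std_y)

# Cosine of the angle between the centered sample vectors.
cosine = dot(xc, yc) / (sqrt(dot(xc, xc)) * sqrt(dot(yc, yc)))

print(f"correlation = {correlation:.4f}, cosine = {cosine:.4f}")
```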

Properties of expectation, variance & covariance

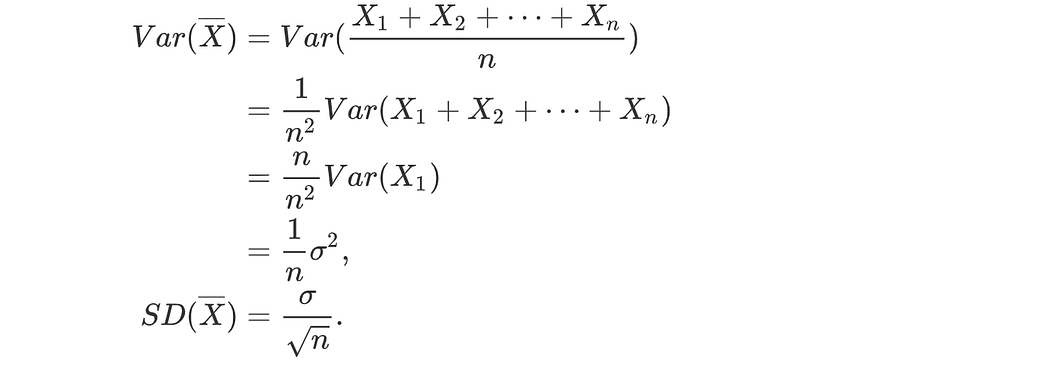

The standard deviation of the Sample Mean

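Define the sample mean of n random variables as

$$\bar{X} = \frac{1}{n}\sum_{i=1}^{n} X_i$$

Given that the variables are sampled independently from the same distribution with variance σ², what is the standard deviation of the sample mean? Because the variables are independent, their variances add:

$$\operatorname{Var}(\bar{X}) = \frac{1}{n^2}\sum_{i=1}^{n}\operatorname{Var}(X_i) = \frac{\sigma^2}{n}, \qquad \operatorname{std}(\bar{X}) = \frac{\sigma}{\sqrt{n}}$$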
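A quick simulation is one way to sanity-check the answer, which works out to σ/√n. The sketch below assumes Gaussian samples and uses illustrative values for σ, n, and the number of trials; the fixed seed only makes the run repeatable.

```python
import random
from math import sqrt
from statistics import fmean, pstdev

random.seed(0)                        # fixed seed so the run is repeatable

sigma, n, trials = 2.0, 25, 20_000    # illustrative values

# Draw many independent samples of size n and record each sample mean.
sample_means = [
    fmean([random.gauss(0.0, sigma) for _ in range(n)])
    for _ in range(trials)
]

empirical = pstdev(sample_means)      # observed spread of the sample means
theoretical = sigma / sqrt(n)         # sigma / sqrt(n)

print(f"empirical:   {empirical:.3f}")
print(f"theoretical: {theoretical:.3f}")
```

Averaging n independent values shrinks the spread by a factor of √n, so the two printed numbers should agree closely.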

This is the foundation for why the weights in fully connected layers are scaled according to the layer size in Xavier or He weight initialization.

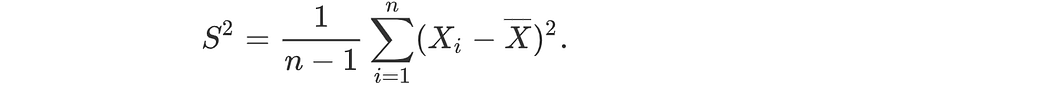

Sample variance

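The mean of the sampled data, x̄, is computed from the data itself and is therefore correlated with it. As a result, the sum of (xᵢ − x̄)² is smaller than the sum of squared deviations from the true population mean μ, and dividing it by n gives a biased (too small) estimate of the variance. To correct the bias, the sample variance is estimated as:

$$s^2 = \frac{1}{n-1}\sum_{i=1}^{n}(x_i - \bar{x})^2$$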
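The effect of dividing by n − 1 instead of n can be checked exactly on a tiny example. The sketch below assumes a fair six-sided die as the population and enumerates every possible sample of size 2 drawn with replacement; exact fractions avoid rounding noise. The setup and the name `variance_estimate` are illustrative.

```python
from fractions import Fraction
from itertools import product

population = [1, 2, 3, 4, 5, 6]     # faces of a fair die (illustrative population)
n = 2                               # sample size

mu = Fraction(sum(population), len(population))
true_variance = sum((x - mu) ** 2 for x in population) / len(population)

def variance_estimate(sample, divisor):
    """Sum of squared deviations from the sample mean, divided by `divisor`."""
    mean = Fraction(sum(sample), len(sample))
    return sum((x - mean) ** 2 for x in sample) / divisor

# Enumerate every equally likely sample drawn with replacement.
samples = list(product(population, repeat=n))
biased = sum(variance_estimate(s, n) for s in samples) / len(samples)
unbiased = sum(variance_estimate(s, n - 1) for s in samples) / len(samples)

print(f"true variance:              {true_variance}")
print(f"average of 1/n estimate:    {biased}")
print(f"average of 1/(n-1) estimate: {unbiased}")
```

Averaged over all samples, the 1/(n − 1) estimator recovers the population variance, while the 1/n estimator comes out too small by a factor of (n − 1)/n.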

Statistical Independence

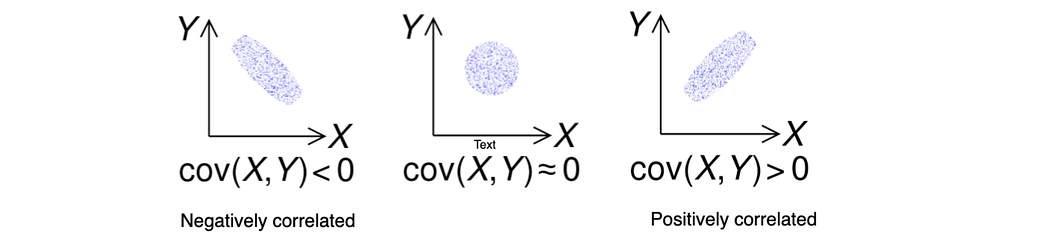

Covariance can be used to measure how two random variables are correlated.

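If two random variables x and y are independent, the following conditions hold:

$$p(x, y) = p(x)\,p(y), \qquad \mathbb{E}[xy] = \mathbb{E}[x]\,\mathbb{E}[y], \qquad \operatorname{Cov}(x, y) = 0$$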

Note: Cov(x, y) = 0 is a necessary but not a sufficient condition for independence. Random variables that are nonlinearly dependent could have Cov(x, y) = 0.
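A small exact example makes the note concrete. Assume X takes the values −1, 0, 1 with equal probability and Y = X², so Y is completely determined by X, yet the covariance is zero. The setup is an illustrative choice.

```python
from fractions import Fraction

# X takes -1, 0, 1 with equal probability, and Y = X**2 is fully determined by X.
outcomes = [(x, x ** 2) for x in (-1, 0, 1)]
p = Fraction(1, 3)

e_x = sum(p * x for x, _ in outcomes)
e_y = sum(p * y for _, y in outcomes)
e_xy = sum(p * x * y for x, y in outcomes)
covariance = e_xy - e_x * e_y

# Independence would require P(X=0, Y=0) == P(X=0) * P(Y=0).
p_joint = Fraction(1, 3)                      # X = 0 forces Y = 0
p_product = Fraction(1, 3) * Fraction(1, 3)   # P(X=0) * P(Y=0)

print(f"Cov(X, Y) = {covariance}")
print(f"P(X=0, Y=0) = {p_joint}, P(X=0) P(Y=0) = {p_product}")
```

The covariance is exactly zero, but the joint probability does not factor into the product of the marginals, so X and Y are dependent.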

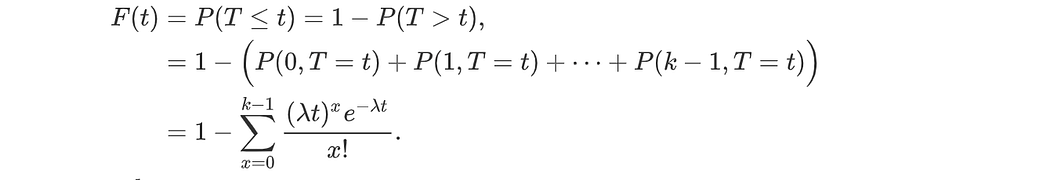

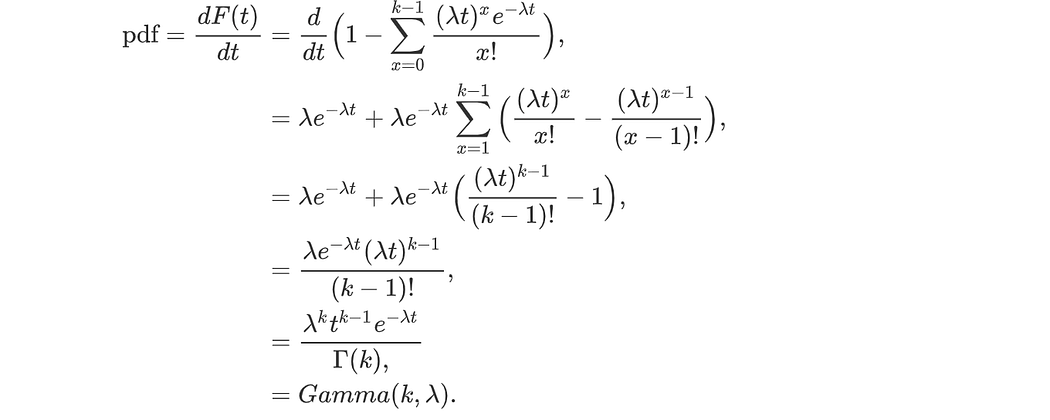

Derive PDF from CDF

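Given a cumulative distribution function F(x) = P(X≤x), we can differentiate it to get the probability density function. Let’s look at an example. The Poisson distribution models the probability of having k events within time t, given an event rate λ. Because the count k is discrete, this is a probability mass function, defined as

$$P(N(t) = k) = \frac{(\lambda t)^k e^{-\lambda t}}{k!}$$

So what is the probability density function of the wait time T for the kth event? The kth event has occurred by time t unless fewer than k events happen within t, so the CDF of the wait time for the kth occurrence is:

$$F(t) = P(T \le t) = 1 - \sum_{j=0}^{k-1} \frac{(\lambda t)^j e^{-\lambda t}}{j!}$$

Its derivative is:

$$f(t) = \frac{dF}{dt} = \frac{\lambda^k t^{k-1} e^{-\lambda t}}{(k-1)!}$$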

So the wait time for the kth event is gamma distributed.
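The relationship can be verified numerically: a finite-difference derivative of the wait-time CDF should match the gamma density with integer shape k. The rate symbol and the specific values of k, rate, and t below are assumptions for illustration, and the function names are hypothetical.

```python
from math import exp, factorial

def wait_time_cdf(t, k, rate):
    """P(T_k <= t) = 1 - P(fewer than k Poisson events occur by time t)."""
    return 1.0 - sum((rate * t) ** j * exp(-rate * t) / factorial(j) for j in range(k))

def gamma_pdf(t, k, rate):
    """Gamma density with integer shape k and the given rate."""
    return rate ** k * t ** (k - 1) * exp(-rate * t) / factorial(k - 1)

k, rate, t, h = 3, 2.0, 1.5, 1e-5   # illustrative values; h is the step size

# Central finite difference approximates the derivative of the CDF at t.
numeric_derivative = (wait_time_cdf(t + h, k, rate) - wait_time_cdf(t - h, k, rate)) / (2 * h)

print(f"dF/dt     = {numeric_derivative:.6f}")
print(f"gamma pdf = {gamma_pdf(t, k, rate):.6f}")
```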

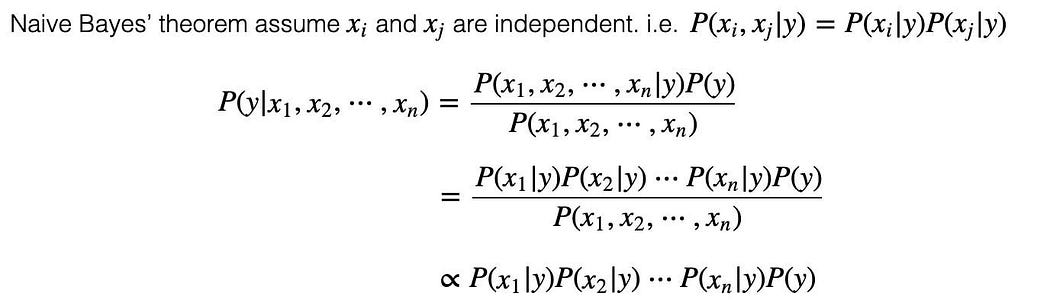

Naive Bayes

Bayes’ theorem solves P(y|x) when P(x|y) is easier to model. We compute P(y|x₁, x₂, x₃, …) through P(x₁, x₂, x₃, …|y). However, the joint conditional probability P(x₁, x₂, x₃, …|y) remains too complex. The number of combinations of x₁, x₂, …, xn grows exponentially, which makes it too hard to collect enough data to estimate it.

Naive Bayes simplifies P(x₁, x₂, …|y) into P(x₁|y)P(x₂|y)…P(xn|y) by assuming that x₁, x₂, x₃, …, xn are conditionally independent of each other given y.

Even though the variables in the Naive Bayes algorithm are rarely completely independent of each other, empirically this remains a useful simplification for many problems.

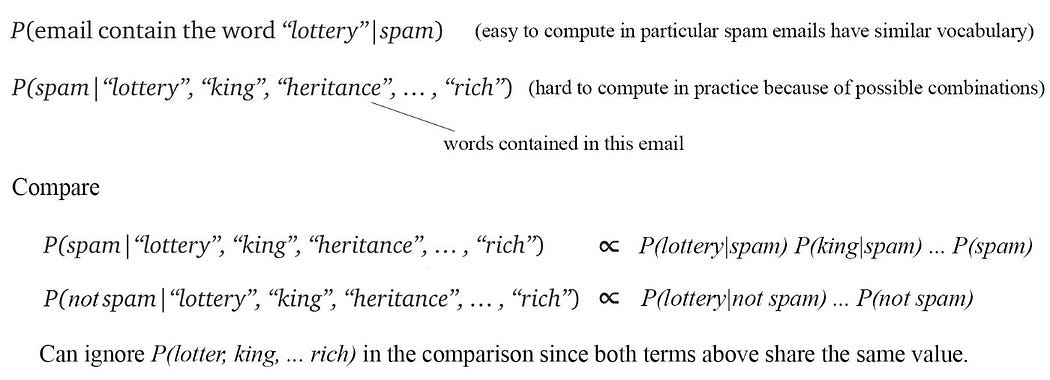

Let’s demonstrate the idea by detecting spam emails. People mark their spam emails, so P(w₁, w₂, …|spam) is much easier to model than P(spam|w₁, w₂, …). With the Naive Bayes assumption, it further simplifies to P(w₁|spam)P(w₂|spam)… We then compute P(spam)P(w₁|spam)P(w₂|spam)… and P(not spam)P(w₁|not spam)P(w₂|not spam)…, and classify the email by whichever of “spam” or “not spam” scores higher.
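A toy version of this spam scorer is sketched below. The four training emails are made up, and two details are additions not discussed above: add-one smoothing, so unseen words do not zero out a score, and log-probabilities, so products of small numbers do not underflow. The names `log_score` and `classify` are hypothetical.

```python
from collections import Counter
from math import log

# Tiny hypothetical training set of labeled emails.
training = [
    ("spam", "win money now"),
    ("spam", "free money offer"),
    ("not spam", "meeting schedule for monday"),
    ("not spam", "project money report"),
]

word_counts = {"spam": Counter(), "not spam": Counter()}
label_counts = Counter()
for label, text in training:
    label_counts[label] += 1
    word_counts[label].update(text.split())

vocabulary = {w for counts in word_counts.values() for w in counts}

def log_score(words, label):
    """log P(label) + sum of log P(w|label), with add-one smoothing (an assumption)."""
    total = sum(word_counts[label].values())
    score = log(label_counts[label] / sum(label_counts.values()))
    for w in words:
        score += log((word_counts[label][w] + 1) / (total + len(vocabulary)))
    return score

def classify(text):
    """Pick the label whose Naive Bayes score is higher."""
    words = text.split()
    return max(word_counts, key=lambda label: log_score(words, label))

print(classify("free money"))
print(classify("monday meeting"))
```

Each word contributes its own factor P(w|label), which is the independence assumption at work: no joint statistics over word combinations are ever collected.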

Similarity

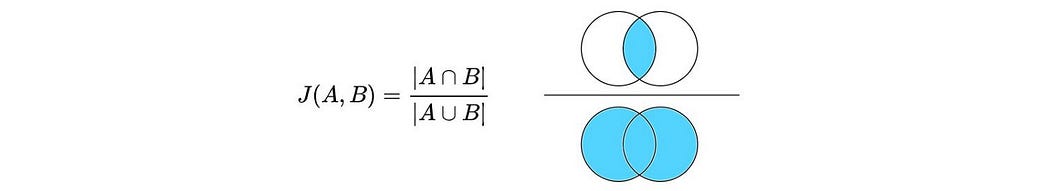

Jaccard Similarity

The Jaccard similarity is the ratio of the size of the intersection of two sets to the size of their union.

$$J(A, B) = \frac{|A \cap B|}{|A \cup B|}$$

Cosine Similarity

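Cosine similarity measures the cosine of the angle between two vectors.

$$\cos\theta = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert\,\lVert \mathbf{v} \rVert}$$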

Pearson Similarity

The Pearson correlation coefficient ρ measures the linear correlation between two variables.

$$\rho_{X,Y} = \frac{\operatorname{Cov}(X, Y)}{\sigma_X\,\sigma_Y}$$
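The three measures are short enough to sketch side by side. The functions below are illustrative implementations that assume non-empty sets and non-zero, non-constant vectors; the inputs are made up.

```python
from math import sqrt

def jaccard(a, b):
    """Size of the intersection divided by the size of the union of two sets."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)

def cosine(u, v):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (sqrt(sum(x * x for x in u)) * sqrt(sum(y * y for y in v)))

def pearson(u, v):
    """Pearson correlation: cosine similarity of the mean-centered vectors."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    return cosine([x - mean_u for x in u], [y - mean_v for y in v])

print(jaccard({"a", "b", "c"}, {"b", "c", "d"}))   # 2 shared items out of 4 distinct
print(cosine([1, 0], [0, 1]))                      # orthogonal vectors
print(pearson([1, 2, 3], [2, 4, 6]))               # perfectly linear relationship
```

Writing Pearson as the cosine of centered vectors shows how the two are related: cosine similarity depends on the vectors' offsets from the origin, while Pearson removes the means first.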